Deploy large models at high performance using FasterTransformer on Amazon SageMaker | AWS Machine Learning Blog

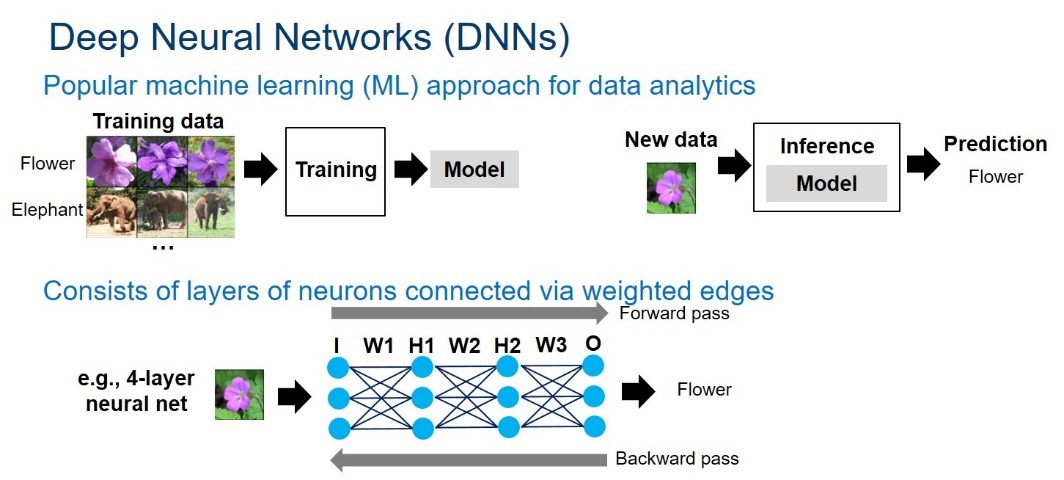

Information | Machine Learning in Python: Main Developments and Technology Trends in Data Science, Machine Learning, and Artificial Intelligence

1: Performance of 3D FFTs in MKL and FFTW in double complex arithmetic...